Top 9 Lead Scoring Models Using Product Usage and Intent Data

What lead scoring with product usage and intent data means in B2B SaaS

Lead scoring assigns numeric values to potential buying signals. Traditionally, lead scores rely on form submissions, firmographics, and email engagement. Product-led lead scoring goes further by adding first-party product usage and third-party intent signals, so the score reflects what prospects actually do rather than only what they say.

Two levels matter. Individual users show interest through their actions, while organizations (referred to here as accounts) signal intent through purchasing, expansion, or cancellation activity. Score at both levels, define a clear method for rolling user-level scores up to the account, and always factor in recency: recent behavior should carry more weight than actions from the distant past.

Score individuals. Qualify accounts. Let outdated actions fade. Prioritize current engagement.

Prepare your CRM and data model for behavioral lead scoring

Effective lead scoring is built on clean, unified data. Start by mapping all key events to a canonical user and account, using a reliable account identifier. Establish how your systems will handle workspaces, subsidiaries, and partner domains, and maintain detailed documentation for data lineage to build confidence and transparency with your sales team.

Identify and define critical events: sign-ups, user invitations, key feature usage, integrations, billing, and limits reached.

Normalize touchpoints from email, live chat, support tickets, and website interactions into a unified timeline.

Establish a recency window for scoring activity, typically 7, 14, or 30 days.

Include seat count and user roles as context for every event.
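A minimal Python sketch of the canonical event record and unified timeline described above. It assumes a hypothetical `domain_to_account` lookup table and illustrative event, source, and role names. It resolves an email domain to one account ID, carries seat count and role on every event, and merges touchpoints from all sources into one time-ordered timeline per account, filtered to a recency window.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, List, Optional


@dataclass(frozen=True)
class CanonicalEvent:
    account_id: str
    user_id: str
    name: str            # e.g. "signup", "invite_sent", "integration_added", "limit_reached"
    source: str          # e.g. "product", "email", "chat", "support", "web"
    occurred_at: datetime
    seat_count: int      # seat count on the account when the event happened
    user_role: str       # e.g. "admin", "editor", "viewer"


def resolve_account(email: str, domain_to_account: Dict[str, str]) -> Optional[str]:
    """Map an email domain to one canonical account ID.

    domain_to_account is a hypothetical lookup; it should also list subsidiary
    and partner domains so they resolve to the right parent account.
    """
    domain = email.lower().rsplit("@", 1)[-1]
    return domain_to_account.get(domain)


def unified_timeline(
    events: List[CanonicalEvent], now: datetime, window_days: int = 14
) -> Dict[str, List[CanonicalEvent]]:
    """Group events from every source into one time-ordered list per account,
    keeping only events inside the recency window."""
    cutoff = now - timedelta(days=window_days)
    timeline: Dict[str, List[CanonicalEvent]] = {}
    for event in sorted(events, key=lambda e: e.occurred_at):
        if event.occurred_at >= cutoff:
            timeline.setdefault(event.account_id, []).append(event)
    return timeline
```

Sorting once before grouping keeps each account's list in chronological order without a second pass.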

If your customer data, including support interactions, emails, and chat history, is still scattered across different platforms, learn how to consolidate this information into one comprehensive customer timeline. This will sharpen your models and enable smoother handoffs between teams.

Centralize your scoring model in the workspace your teams use most. You can calculate scores in a unified platform like Routine, or within established CRMs such as Salesforce or HubSpot. Notion, for example, can store model documentation, while CRMs excel at routing leads efficiently at scale.

9 lead scoring models that use product usage and intent signals

1) PQL feature-adoption model for high-value actions

Assign greater weight to feature activities that strongly correlate with eventual revenue. Actions like enabling SSO, setting up API keys, or exporting data are especially meaningful. Apply binary points for task completion and introduce a mild recency decay to maintain relevance.

Inputs: feature flags, user roles, event timestamps.

Rule: +25 for enabling SSO in the last 14 days; +15 for API key usage.

Outcome: Users qualify as PQLs at 60 points; accounts at 120.
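A minimal sketch of the feature-adoption rule. Event names are hypothetical, and the +20 weight for data exports is an assumption (the rule above only specifies SSO and API keys). For simplicity it applies a hard 14-day window to every feature instead of a mild decay, and it assumes an account score is the sum of its users' scores; both are assumptions, not part of the model definition.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, Iterable, Set, Tuple

# +25 (SSO) and +15 (API key) come from the rule; the export weight is hypothetical.
FEATURE_POINTS = {"sso_enabled": 25, "api_key_used": 15, "data_exported": 20}
WINDOW = timedelta(days=14)  # assumption: the 14-day window applies to every feature
USER_PQL_THRESHOLD = 60
ACCOUNT_PQL_THRESHOLD = 120

UserKey = Tuple[str, str]  # (account_id, user_id)


@dataclass
class FeatureEvent:
    account_id: str
    user_id: str
    feature: str
    timestamp: datetime


def score_users(events: Iterable[FeatureEvent], now: datetime) -> Dict[UserKey, int]:
    """Binary points: each high-value feature counts once per user inside the window."""
    features: Dict[UserKey, Set[str]] = {}
    for e in events:
        if e.feature in FEATURE_POINTS and timedelta(0) <= now - e.timestamp <= WINDOW:
            features.setdefault((e.account_id, e.user_id), set()).add(e.feature)
    return {key: sum(FEATURE_POINTS[f] for f in feats) for key, feats in features.items()}


def score_accounts(user_scores: Dict[UserKey, int]) -> Dict[str, int]:
    """Assumption: an account's score is the sum of its users' scores."""
    totals: Dict[str, int] = {}
    for (account_id, _user_id), score in user_scores.items():
        totals[account_id] = totals.get(account_id, 0) + score
    return totals


def pqls(user_scores: Dict[UserKey, int], account_scores: Dict[str, int]):
    """Return (qualified users, qualified accounts) at the 60 / 120 thresholds."""
    users = {k for k, s in user_scores.items() if s >= USER_PQL_THRESHOLD}
    accounts = {k for k, s in account_scores.items() if s >= ACCOUNT_PQL_THRESHOLD}
    return users, accounts
```

Storing features in a set is what makes the points binary: enabling SSO twice still earns 25, not 50.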

2) Activation milestone model from sign-up to “Aha!”

Track each customer’s journey to their first moment of real value. Assign points to each completed milestone, rewarding quicker completion over slower progress.

Inputs: account creation, first project, initial integration, first item shared.

Rule: +10 points for every milestone; multiply score by 1.5 if completed within 48 hours.

Outcome: Trigger sales-assist engagement when three milestones are reached within 72 hours.
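The milestone rule can be sketched as follows. Two interpretations here are assumptions: account creation starts the clock rather than earning points, and the 1.5× multiplier applies to each milestone finished within 48 hours of sign-up rather than to the total. Milestone names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Dict

# Hypothetical milestone names; account creation starts the clock and earns no points here.
MILESTONES = ("first_project", "first_integration", "first_share")
POINTS_PER_MILESTONE = 10
FAST_MULTIPLIER = 1.5
FAST_WINDOW = timedelta(hours=48)
SALES_ASSIST_WINDOW = timedelta(hours=72)
SALES_ASSIST_MILESTONES = 3


def activation_score(signup_at: datetime, completed: Dict[str, datetime]) -> float:
    """+10 per milestone; assumption: 1.5x for each milestone finished within 48h of sign-up."""
    score = 0.0
    for name in MILESTONES:
        done_at = completed.get(name)
        if done_at is None:
            continue
        points = float(POINTS_PER_MILESTONE)
        if done_at - signup_at <= FAST_WINDOW:
            points *= FAST_MULTIPLIER
        score += points
    return score


def needs_sales_assist(signup_at: datetime, completed: Dict[str, datetime]) -> bool:
    """True when at least three milestones were reached within 72 hours of sign-up."""
    fast = [n for n in MILESTONES if n in completed and completed[n] - signup_at <= SALES_ASSIST_WINDOW]
    return len(fast) >= SALES_ASSIST_MILESTONES
```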

3) Consumption intensity model using frequency and depth

High-frequency use is a strong readiness signal. Count active days, measure engagement depth per session, and give extra weight to seat-count increases within a domain.

Inputs: daily active users (DAU), weekly active users (WAU), actions performed, objects created, seats added.

Rule: Score = (DAU/WAU × 20) + 2 × seats added in the previous 14 days.

Outcome: Automatically trigger outreach at 25 points or higher.
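A direct translation of the consumption formula, assuming seat additions arrive as a list of dates and treating "previous 14 days" as inclusive of today. Because DAU cannot exceed WAU, the usage term contributes at most 20 points, so crossing 25 requires recent seat growth as well.

```python
from datetime import date, timedelta
from typing import Iterable

OUTREACH_THRESHOLD = 25
SEAT_LOOKBACK = timedelta(days=14)


def consumption_score(dau: float, wau: float, seat_added_dates: Iterable[date], today: date) -> float:
    """Score = (DAU/WAU x 20) + 2 x seats added in the previous 14 days."""
    stickiness = dau / wau if wau else 0.0  # avoid dividing by zero for inactive accounts
    recent_seats = sum(1 for d in seat_added_dates if timedelta(0) <= today - d <= SEAT_LOOKBACK)
    return stickiness * 20 + 2 * recent_seats


def should_trigger_outreach(score: float) -> bool:
    return score >= OUTREACH_THRESHOLD
```

For example, a DAU/WAU ratio of 0.5 contributes 10 points, so the account also needs eight recent seats (16 points) to reach 26 and trigger outreach.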

4) Integration depth model signaling commitment

Connecting to other essential systems raises customer commitment and switching costs. Score integrations with core systems higher, and treat two-way syncs as a particularly strong commitment signal.

Inputs: integration count, type of integration, sync direction.

Rule: +20 for CRM, +15 for SSO, +10 for storage, +5 for all other integrations.

Outcome: Assign priority to accounts scoring 35 or more, provided a CRM integration exists.
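A sketch of the integration rule, assuming integrations are already labeled with hypothetical category names such as `crm`, `sso`, and `storage`. Each connected integration scores separately, and priority requires both the 35-point threshold and a CRM connection. The rule gives no point value for two-way syncs, so none is applied here.

```python
from typing import Iterable, List

# Point values from the rule; category labels are hypothetical.
INTEGRATION_POINTS = {"crm": 20, "sso": 15, "storage": 10}
OTHER_POINTS = 5
PRIORITY_THRESHOLD = 35


def integration_score(integration_types: Iterable[str]) -> int:
    """Sum points for every connected integration; other categories get +5."""
    return sum(INTEGRATION_POINTS.get(t, OTHER_POINTS) for t in integration_types)


def is_priority(integration_types: List[str]) -> bool:
    """Priority needs 35+ points and at least one CRM integration."""
    return "crm" in integration_types and integration_score(integration_types) >= PRIORITY_THRESHOLD
```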

5) Seat and collaborator growth model at the account level

Growth within an account, such as adding new users or editors, often correlates with larger deals. Score new invitations, role diversification, and consistently active contributors. Deduct points for unused seats.

Inputs: net seat changes, invite velocity, user roles, active editors.

Rule: +3 for each new seat added in the past week; −1 for every dormant seat.

Outcome: Initiate expansion processes if there’s a net gain of eight seats within a month.
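A sketch of the seat-growth rule, assuming dormant seats are supplied as a precomputed count and that "within a month" means the trailing 30 days. Seat changes are modeled as hypothetical `(date, delta)` pairs, with +1 for an added seat and -1 for a removed one.

```python
from datetime import date, timedelta
from typing import Iterable, Tuple

EXPANSION_NET_SEATS = 8


def seat_growth_score(seat_added_dates: Iterable[date], dormant_seats: int, today: date) -> int:
    """+3 per seat added in the past week, -1 per currently dormant seat."""
    week_ago = today - timedelta(days=7)
    new_this_week = sum(1 for d in seat_added_dates if week_ago < d <= today)
    return 3 * new_this_week - dormant_seats


def should_start_expansion(seat_changes: Iterable[Tuple[date, int]], today: date) -> bool:
    """Net seat gain of 8+ over the trailing 30 days (assumed meaning of 'a month')."""
    month_ago = today - timedelta(days=30)
    net = sum(delta for d, delta in seat_changes if month_ago < d <= today)
    return net >= EXPANSION_NET_SEATS
```

Using net changes rather than raw additions keeps an account that adds ten seats and removes five from triggering an expansion play.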

6) Pricing and procurement intent model from web signals

Some online actions signal strong buying intent: visiting the pricing page, reading security documentation, reviewing SLAs, or using budget calculators. Factor in engagement depth and repeat visits for higher accuracy.

Inputs: pricing page views, security documentation access, SLA page visits, calculator engagement.

Rule: +30 for a pricing page view with at least 60 seconds spent; +15 for using budget calculators.

Outcome: Route leads to an account executive once intent signals reach 40 points in total.
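A sketch of the web-intent rule under two assumptions: every pricing view that clears 60 seconds scores again, so repeat visits accumulate, and calculator use counts once. Security and SLA page visits are listed as inputs, but the rule gives them no point values, so they are left unweighted here. Page labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Iterable

PRICING_POINTS = 30
CALCULATOR_POINTS = 15
MIN_PRICING_DWELL_SECONDS = 60
ROUTE_TO_AE_THRESHOLD = 40


@dataclass
class WebEvent:
    page: str              # hypothetical labels: "pricing", "budget_calculator", "security", "sla"
    dwell_seconds: float


def web_intent_score(events: Iterable[WebEvent]) -> int:
    """Each pricing view of 60s+ scores 30 (repeat visits accumulate); calculator use adds 15 once."""
    score = 0
    used_calculator = False
    for e in events:
        if e.page == "pricing" and e.dwell_seconds >= MIN_PRICING_DWELL_SECONDS:
            score += PRICING_POINTS
        elif e.page == "budget_calculator":
            used_calculator = True
    return score + (CALCULATOR_POINTS if used_calculator else 0)


def route_to_ae(score: int) -> bool:
    return score >= ROUTE_TO_AE_THRESHOLD
```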

7) Account-based third-party intent model for named targets

Combine your product usage metrics with insights on customer intent collected from third-party data providers like 6sense or Bombora. Focus on prospects showing both high on-platform activity and strong network intent, while constraining false positives with strict recency filters.

Inputs: relevant topics, intent surges, ad interactions, competitor comparison signals.

Rule: +20 for a topic surge; double the score if an active user exists within the account’s domain.

Outcome: Launch ABM plays for accounts that match intent criteria.
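A sketch of the combined rule, assuming surge reports arrive as one date per topic surge and using a hypothetical 14-day recency filter (the section calls for a strict filter but does not name one). The domain check simply compares the account's domain with active users' email domains; no real 6sense or Bombora API is shown.

```python
from datetime import date, timedelta
from typing import Iterable

SURGE_POINTS = 20
SURGE_MAX_AGE = timedelta(days=14)  # hypothetical "strict" recency filter


def has_active_user_in_domain(account_domain: str, active_user_emails: Iterable[str]) -> bool:
    """Does any active product user have an email on the account's domain?"""
    domain = account_domain.lower()
    return any(email.lower().rsplit("@", 1)[-1] == domain for email in active_user_emails)


def third_party_intent_score(surge_dates: Iterable[date], active_user_present: bool, today: date) -> int:
    """+20 per recent topic surge, doubled when the account already has an active user."""
    recent = sum(1 for d in surge_dates if timedelta(0) <= today - d <= SURGE_MAX_AGE)
    score = SURGE_POINTS * recent
    return score * 2 if active_user_present else score
```

Dropping stale surges before doubling is what constrains false positives: an old surge cannot be amplified just because the account happens to have an active user today.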

8) Champion movement and alumni model

Pay close attention when power users or advocates, sometimes called champions, change companies. When these individuals resurface at new domains, prioritize them, especially if their prior NPS was high.

Inputs: change in email domain, historical account engagement, previous NPS, user seniority.

Rule: +40 for alumni sign-up; add +10 if prior NPS was 9 or higher.

Outcome: Guarantee executive outreach within 24 hours.
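A sketch of alumni detection, assuming a hypothetical stable `person_key` (for example, from an enrichment vendor) that links a person's old and new email addresses. A sign-up scores only when the domain has actually changed.

```python
from dataclasses import dataclass
from typing import Dict, Optional

ALUMNI_POINTS = 40
HIGH_NPS_BONUS = 10


@dataclass
class ChampionRecord:
    person_key: str            # hypothetical stable ID linking old and new emails
    previous_domain: str
    last_nps: Optional[int]    # None if the person never answered an NPS survey


def email_domain(email: str) -> str:
    return email.lower().rsplit("@", 1)[-1]


def alumni_signup_score(signup_email: str, person_key: str, champions: Dict[str, ChampionRecord]) -> int:
    """+40 when a known champion signs up from a new domain; +10 more if prior NPS was 9+."""
    record = champions.get(person_key)
    if record is None or email_domain(signup_email) == record.previous_domain.lower():
        return 0
    score = ALUMNI_POINTS
    if record.last_nps is not None and record.last_nps >= 9:
        score += HIGH_NPS_BONUS
    return score
```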

9) Time-decay blended model to stabilize noise

Synthesize multiple signals with weighted importance and apply time decay to keep results current. Recent actions are more influential, while older actions quickly lose impact. Keep the calculation straightforward for ease of maintenance.

Inputs: weighted outputs from all prior models; 14-day score half-life for decay.

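Rule: $\text{score}_t = \text{score}_0 \times 0.5^{t/14}$, where $t$ is the number of days since the action and $\text{score}_0$ is its original score.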

Outcome: Consistently classify accounts into A, B, or C tiers based on thresholds.
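A sketch of the blended model using the 14-day half-life decay above. Per-model weights and the A/B/C cut-offs (100 and 50 points) are hypothetical, since the section does not specify them; future-dated events are clamped to zero days so they cannot outscore fresh ones.

```python
from datetime import date
from typing import Dict, Iterable, Tuple

HALF_LIFE_DAYS = 14
# Hypothetical tier cut-offs, checked from highest to lowest.
TIER_CUTOFFS = (("A", 100.0), ("B", 50.0))


def decayed(points: float, days_ago: float, half_life: float = HALF_LIFE_DAYS) -> float:
    """score_t = score_0 * 0.5 ** (t / half_life)."""
    return points * 0.5 ** (max(days_ago, 0) / half_life)


def blended_score(signals: Iterable[Tuple[str, float, date]], today: date, weights: Dict[str, float]) -> float:
    """signals: (model_name, points, event_date) from the other models; weights are hypothetical."""
    return sum(
        weights.get(model, 1.0) * decayed(points, (today - event_date).days)
        for model, points, event_date in signals
    )


def tier(score: float) -> str:
    for label, cutoff in TIER_CUTOFFS:
        if score >= cutoff:
            return label
    return "C"
```

With a 14-day half-life, a 40-point signal is worth 20 points after two weeks and 10 after four, which is the "older actions quickly lose impact" behavior the model describes.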

How to deploy these lead scoring models across your revenue stack

Start deployment with one product team instead of the entire company. Validate model accuracy and collect feedback from sales on a weekly basis, then expand to additional segments as needed.

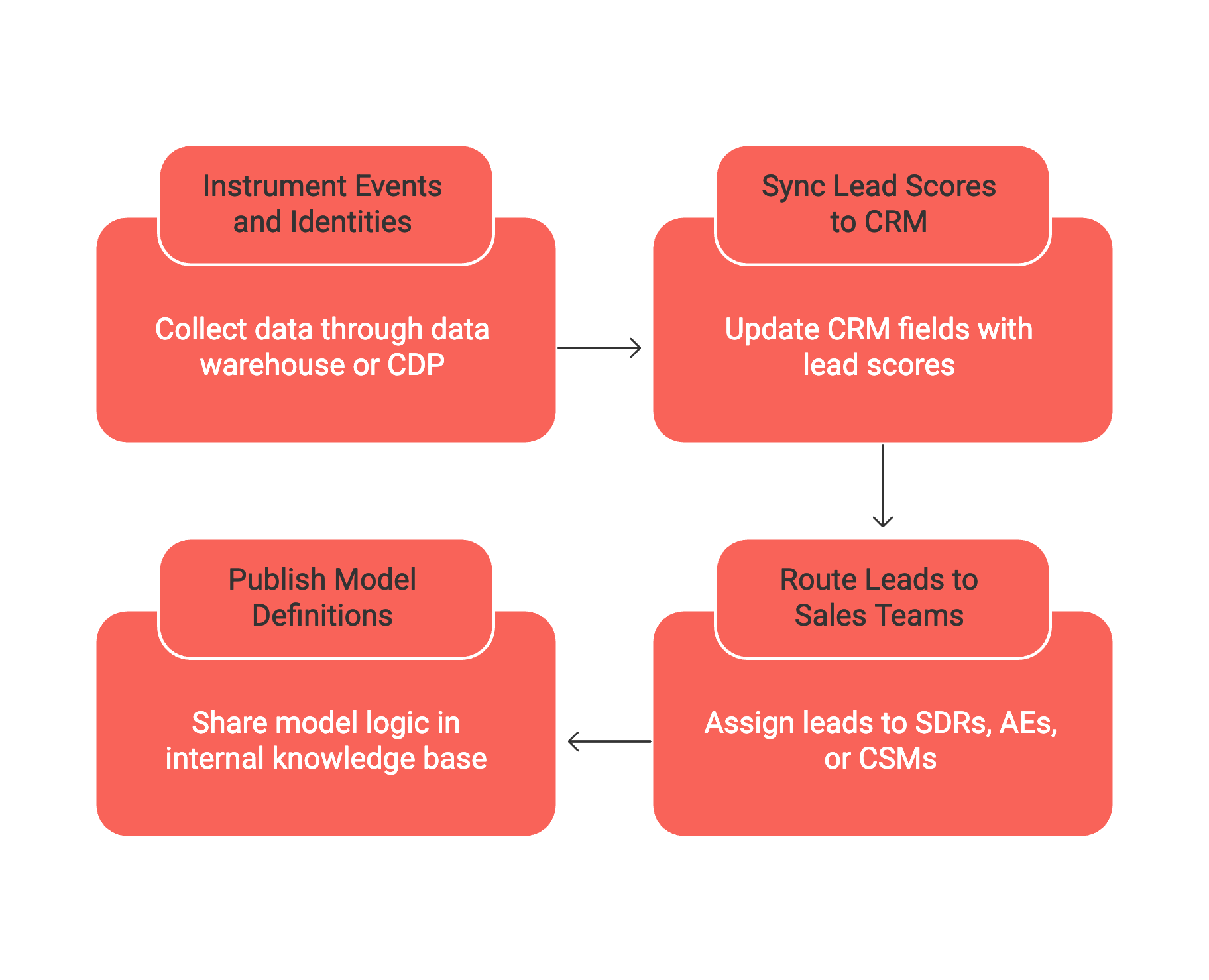

Instrument all relevant events and identities through your data warehouse or CDP.

Sync updated lead scores to CRM fields, keeping user-level and account-level scores in clearly separate fields.

Route leads to SDRs, AEs, or CSMs in accordance with your playbooks and SLAs.

Publish model definitions and scoring logic in your internal knowledge base to maintain transparency.
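A minimal routing sketch, assuming scores from the models above are already synced to CRM fields. The playbook encoded here (customers to CSMs, tier-A accounts or web intent of 40+ to AEs, remaining PQLs to SDRs, everything else to a nurture queue) is hypothetical; substitute your own rules and SLAs.

```python
from dataclasses import dataclass

AE_WEB_INTENT_THRESHOLD = 40  # matches the web-intent model's routing rule


@dataclass
class ScoredLead:
    is_customer: bool
    account_tier: str      # "A", "B", or "C" from the blended model
    user_is_pql: bool
    web_intent: int


def route(lead: ScoredLead) -> str:
    """Hypothetical playbook, checked in priority order."""
    if lead.is_customer:
        return "CSM"       # existing customers: expansion handled by customer success
    if lead.account_tier == "A" or lead.web_intent >= AE_WEB_INTENT_THRESHOLD:
        return "AE"
    if lead.user_is_pql:
        return "SDR"
    return "nurture"       # hypothetical fallback queue
```

Checking rules in a fixed priority order keeps each lead in exactly one queue, which makes routing decisions easy to explain to reps.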

Bridge lead scores to outreach and enrichment workflows. This guide to sales automations that shorten response time pairs well with PQL-based lead assignment. For platform selection, weigh the pros and cons in “All-in-One Workspaces vs Dedicated Project Tools: Which Serves Your Business Best?” before finalizing where your scoring models will live.

Governance, bias, and compliance for lead scoring in 2026

Establish safeguards. Limit the influence any single data source can have on the overall lead score to ensure balanced results. Review unusual cases with both sales and marketing on a monthly cadence, and always apply filters so only consented data is included for relevant geographies.

Document every scored field, its owner, and retention period.

Allow sales reps (and, when appropriate, prospects) to request and receive explanations for scores.

Remove protected attributes (and proxies) from scoring inputs.

Log all score overrides, with dates and names of approvers.
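Two of these safeguards, capping any single source's influence and scoring only consented data, can be expressed directly in code. This sketch assumes hypothetical per-source ceilings and a placeholder set of consent-required regions, and represents events as plain dictionaries.

```python
from typing import Dict, Iterable, List

# Hypothetical per-source ceilings; tune them against your own historical data.
SOURCE_CAPS: Dict[str, float] = {"product_usage": 60, "web_intent": 40, "third_party_intent": 30}
DEFAULT_CAP = 20.0
# Placeholder set of regions where only consented data may be scored.
CONSENT_REQUIRED_REGIONS = {"EU", "UK"}


def consented_only(events: Iterable[dict]) -> List[dict]:
    """Drop events from consent-required regions unless the person opted in."""
    return [e for e in events if e["region"] not in CONSENT_REQUIRED_REGIONS or e.get("consent", False)]


def capped_total(contributions: Dict[str, float]) -> float:
    """Clip each source to its ceiling before summing so no single feed dominates."""
    return sum(min(points, SOURCE_CAPS.get(source, DEFAULT_CAP)) for source, points in contributions.items())
```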

KPI instrumentation and reporting for product-led lead scoring

Demonstrate your models’ effectiveness with clear metrics. Focus on tracking both conversion rates and velocity, and always monitor overall volume alongside precision.

PQL-to-SQL conversion rates by market segment and acquisition channel.

Average time to first sales engagement after scoring threshold is met.

Closed-won rate and average deal size for scored cohorts vs those unscored.

Volume of false positives and false negatives, as identified through sales team feedback.
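A sketch of three of these KPIs, assuming a hypothetical per-PQL record with segment, channel, SQL outcome, timestamps, and a rep-feedback flag. False negatives need data on leads that converted without ever being scored, so only the false-positive rate is computed here.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import Callable, Dict, Hashable, List, Optional


@dataclass
class PqlRecord:
    segment: str
    channel: str
    became_sql: bool
    qualified_at: datetime                 # when the scoring threshold was crossed
    first_touch_at: Optional[datetime]     # first sales engagement, if any
    flagged_false_positive: bool           # from rep feedback


def conversion_rate_by(records: List[PqlRecord], key: Callable[[PqlRecord], Hashable]) -> Dict[Hashable, float]:
    """PQL-to-SQL conversion grouped by any key, e.g. segment or (segment, channel)."""
    totals: Dict[Hashable, int] = defaultdict(int)
    converted: Dict[Hashable, int] = defaultdict(int)
    for r in records:
        totals[key(r)] += 1
        converted[key(r)] += int(r.became_sql)
    return {k: converted[k] / totals[k] for k in totals}


def mean_hours_to_first_touch(records: List[PqlRecord]) -> Optional[float]:
    """Average hours from threshold crossing to first sales engagement (untouched PQLs excluded)."""
    gaps = [
        (r.first_touch_at - r.qualified_at).total_seconds() / 3600
        for r in records
        if r.first_touch_at is not None
    ]
    return mean(gaps) if gaps else None


def false_positive_rate(records: List[PqlRecord]) -> float:
    return sum(r.flagged_false_positive for r in records) / len(records) if records else 0.0
```

Passing the grouping key as a function lets the same code report by segment, by channel, or by both without separate queries.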

Keep reporting dashboards and definitions consistent across quarters. Avoid silent or unexplained metric changes, and always inform executive teams of definition adjustments before implementation.

Common pitfalls to avoid when scoring based on usage

Don’t let surface-level events dominate your models. Simply logging in is far less significant than a more committed action like exporting data for analysis, so weight each accordingly. Transparent logic beats black-box scoring you can’t explain to reps.

Scoring only user-level behaviors, while ignoring the broader account context.

Overfitting models to last quarter’s most successful deals.

Allowing routing delays that miss genuine buying signals.

Losing track of responsibility for ongoing model updates.

Final recommendations for CXOs planning a scoring revamp

Launch with two models: one grounded in product usage, the other focused on web intent. Make your scoring rules public and train sales and operations thoroughly. Review preliminary results every week for a quarter before adding a third model to fill any apparent gaps.

Centralizing your scoring in a connected workspace, such as Routine, can help align projects, CRM, and documentation. Dedicated platforms like Salesforce or HubSpot remain strong choices for scaled lead routing. Choose the best platform based on your team’s governance needs and day-to-day workflow, not the latest industry trends.

FAQ

What is product-led lead scoring, and why does it matter?

Product-led lead scoring enhances traditional methods by integrating product usage and intent data, offering a more precise view of a prospect's genuine interest. Ignoring this approach could mean relying on superficial metrics and losing out on deeper sales insights.

How can I ensure my lead scoring model remains accurate and relevant?

Maintain accuracy by regularly updating your model with recent data and feedback, while also setting clear recency windows for lead activity. Blindly sticking to once-relevant data could lead to skewed scoring and missed opportunities.

How does Routine support a unified approach to lead scoring?

Routine allows teams to centralize scoring models, maintain documentation, and ensure seamless data synchronization across platforms. Failing to centralize could mean fragmented insights and inefficient lead management.

Why is it important to score both individual users and accounts?

Scoring users individually captures granular interest, while account-level scoring reflects broader organizational intent. Neglecting either level risks missing critical engagement cues and undermining sales strategies.

What are the risks of overfitting lead scoring models to past successes?

Overfitting leads to models that excel at predicting past outcomes but fail at identifying future opportunities. This shortsightedness can stifle growth and limit adaptability to changing market conditions.

How do time-decay models benefit lead scoring?

Time-decay models keep scores dynamic by de-emphasizing older actions, ensuring current behaviors influence decisions. Without this approach, models might overvalue stale data and lead to inefficient prioritizations.

What pitfalls should be avoided when implementing lead scoring based on usage?

Avoid giving undue weight to trivial user actions, and ensure broader account context is considered. Allowing superficial signals to dominate can mislead sales strategies and undermine decision-making.